Secure AI - Avoid Getting Famous at DefCon

At this year’s DefCon, the world's largest security conference, one theme dominated the conversation: AI systems are already under attack. From AI agents exposing sensitive data, to misconfigured Model Context Protocol (MCP) servers granting unauthorized access, to LLM-powered apps tricked by prompt injection, DefCon made it brutally clear that vulnerabilities in AI aren't theoretical. They're here, and they're being actively exploited.

Does that mean you should halt your agentic AI innovation? Absolutely not. But it is a powerful call to take the many threats facing agentic AI ecosystems seriously. To truly unlock the power of data-powered innovation and agentic AI, organizations must weave DevSecOps principles into the Golden Path - turning security from a burdensome afterthought into a seamless, automated foundation for safe, rapid innovation.

Why Security-First AI Matters

AI brings unprecedented opportunities, but it also amplifies familiar risks while introducing genuinely new ones. The convergence of data pipelines, complex model ecosystems, and autonomous agents has created an expanded, more fragile attack surface:

- Supply Chain Vulnerabilities: As the npm ecosystem has shown, a single compromised library can ripple through thousands of applications. AI projects depend on frameworks like LangChain, Hugging Face, PyTorch, and TensorFlow, and each of them is a potential weak link.

- MCP and Integration Risks: MCP servers and agent frameworks connect AI models to APIs and tools. If a connector lacks proper authorization, an agent might gain access to sensitive data or perform unintended actions.

- AI-Specific Exploits: With prompt injection, adversarial triggers, data poisoning, and cross-agent privilege escalation, attackers now have new vectors to manipulate AI behavior in ways traditional testing never accounted for.

The attack surface is larger than ever. That’s why the answer isn’t more manual reviews or slowing down delivery. It’s embedding security directly into the developer workflow.

Two tools are essential for mastering this new security landscape: the Software Bill of Materials (SBOM) and robust Role-Based Access Control (RBAC).

SBOMs: Transparency and Trust for AI Projects

An SBOM is a complete inventory of every library, framework, and dependency that makes up a system. Just as a bill of materials (BOM) is vital in manufacturing, the SBOM is becoming the key resource for the Data and AI world. For AI, SBOMs provide critical visibility across:

- Data Products (ingestion pipelines, storage, transformations).

- Model Training & Inference (PyTorch, TensorFlow, scikit-learn).

- Agentic AI Systems (like those built with LangChain and MCP connectors).

With SBOMs, organizations gain a clear map of dependencies. When a new vulnerability (like the next NPM security crisis) is disclosed, you can instantly check exposure across all your AI projects. Teams without an automated, up-to-date SBOM are simply left guessing.

    {
      "bomFormat": "CycloneDX",
      "specVersion": "1.4",
      "version": 1,
      "components": [
        { "type": "library", "name": "langchain", "version": "0.1.12" },
        { "type": "library", "name": "openai", "version": "1.10.0" },
        { "type": "library", "name": "fastapi", "version": "0.103.2" },
        { "type": "library", "name": "uvicorn", "version": "0.24.0" },
        { "type": "library", "name": "pydantic", "version": "2.5.2" }
      ]
    }

This manifest provides transparency. If a vulnerability emerges in FastAPI 0.103.2, security teams know immediately which AI agents are at risk.
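To make that exposure check concrete, here is a minimal sketch in Python. It assumes each service's SBOM is available as a CycloneDX JSON document like the one above; the service names, the in-memory `SBOMS` inventory, and the `affected_services` helper are hypothetical and stand in for whatever SBOM store and vulnerability scanner your platform actually uses.

```python
import json

# Hypothetical inventory: service name -> CycloneDX SBOM as a JSON string.
# In practice these would be loaded from a repository or artifact store.
SBOMS = {
    "support-agent": json.dumps({
        "bomFormat": "CycloneDX", "specVersion": "1.4", "version": 1,
        "components": [
            {"type": "library", "name": "langchain", "version": "0.1.12"},
            {"type": "library", "name": "fastapi", "version": "0.103.2"},
        ],
    }),
    "batch-scorer": json.dumps({
        "bomFormat": "CycloneDX", "specVersion": "1.4", "version": 1,
        "components": [
            {"type": "library", "name": "pydantic", "version": "2.5.2"},
        ],
    }),
}


def affected_services(sboms, name, versions):
    """Return the sorted names of services whose SBOM lists `name` at one of `versions`."""
    hits = []
    for service, raw in sboms.items():
        components = json.loads(raw).get("components", [])
        for component in components:
            same_name = component.get("name", "").lower() == name.lower()
            if same_name and component.get("version") in versions:
                hits.append(service)
                break  # one match is enough to flag the service
    return sorted(hits)


if __name__ == "__main__":
    # Which services ship the FastAPI version named in the advisory?
    print(affected_services(SBOMS, "fastapi", {"0.103.2"}))
```

The lookup is deliberately simple: exact version matching only, with no version ranges or transitive resolution. A real scanner would handle both.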

Role-Based Access: Controlling What Agents Can Do

While SBOMs protect against vulnerable dependencies, role-based access control (RBAC) protects against misuse of capabilities when AI agents connect to MCP servers and tools.

A lack of RBAC is a recipe for disaster. If an agent has blanket permissions to query production databases or call sensitive APIs, an attacker only needs a successful prompt injection to trigger a massive data leak or system compromise.

By enforcing RBAC:

- Each AI agent only has the minimum privileges required.

- Access to tools and data sources is segmented by role and scope.

- Unauthorized tool usage is blocked by design.

For example, a customer support agent should only be allowed to Read from a knowledge base and Create support tickets. It should be blocked from deleting data, issuing refunds, or accessing HR systems - no matter how cleverly it’s prompted.
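The customer support example can be sketched as a deny-by-default check that runs before any tool call. This is an illustration under stated assumptions, not a specific framework's API: the role name, the tool names, and the `authorize` and `call_tool` helpers are all hypothetical.

```python
# Hypothetical role definitions: role -> set of (tool, action) pairs it may use.
ROLE_PERMISSIONS = {
    "customer_support_agent": {
        ("knowledge_base", "read"),
        ("support_tickets", "create"),
    },
}


class ToolAccessDenied(PermissionError):
    """Raised when an agent requests a tool action its role does not grant."""


def authorize(role, tool, action):
    """Deny by default: only explicitly granted (tool, action) pairs pass."""
    allowed = ROLE_PERMISSIONS.get(role, set())
    if (tool, action) not in allowed:
        raise ToolAccessDenied(f"{role} may not {action} on {tool}")


def call_tool(role, tool, action, handler, *args):
    """Check authorization before the handler runs, whatever the prompt said."""
    authorize(role, tool, action)
    return handler(*args)


if __name__ == "__main__":
    # Allowed: reading from the knowledge base.
    print(call_tool("customer_support_agent", "knowledge_base", "read",
                    lambda query: f"results for {query}", "reset password"))
    # Blocked: issuing a refund is not in the role's grants.
    try:
        call_tool("customer_support_agent", "payments", "refund",
                  lambda order_id: "refunded", "A-1001")
    except ToolAccessDenied as err:
        print(f"blocked: {err}")
```

The check sits outside the model, so a successful prompt injection can change what the agent asks for but not what it is allowed to do.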

The Golden Path: Security Without Friction

The Golden Path in platform engineering is about opinionated, paved roads that make it easy for developers to move fast while adhering to best practices. For security, this means automating guardrails, so compliance is the easiest choice.

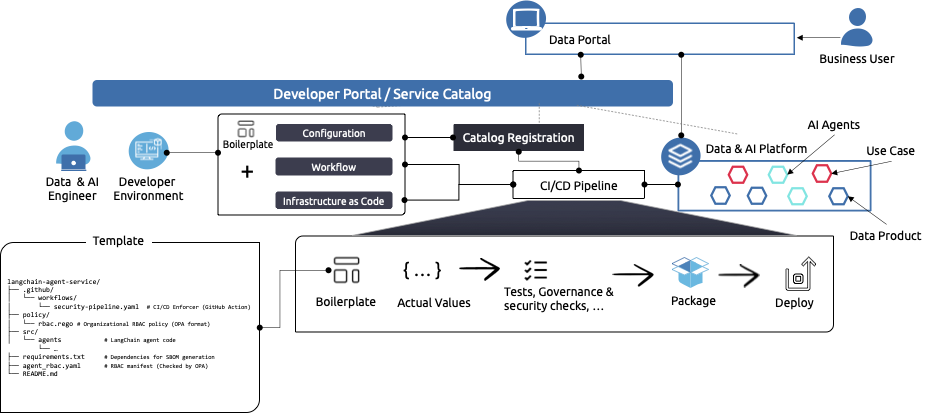

The security journey starts the moment a new project is created, using a developer portal like Backstage. Instead of manually configuring security tools, developers choose an approved Golden Path Template for their “LangChain Agentic Service.”

This template isn’t just a starting structure; it’s a security guarantor. It automatically scaffolds the project with:

- Secure Framework Defaults: A LangChain boilerplate that enforces secure settings for tool execution and prompt handling.

- SBOM Automation: A requirements.txt file pre-populated with known-secure dependencies, along with a workflow definition in the project repository that triggers an SBOM generation GitHub Action upon creation.

- RBAC Policy Stubs: Default files defining the minimum required permissions (agent_rbac.yaml) for the LangChain agent to connect to an MCP server, ensuring the agent adheres to the principle of least privilege from day one. Crucially, the template includes a policy/rbac.rego file containing the organizational RBAC rules.
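As a rough illustration of what the SBOM automation step produces, the sketch below turns pinned requirements into a minimal CycloneDX-style document. It is a simplification under stated assumptions: the `sbom_from_requirements` helper is hypothetical, it only understands plain `name==version` lines, and a real pipeline would rely on a dedicated SBOM generator that also resolves transitive dependencies.

```python
import json


def sbom_from_requirements(text):
    """Build a minimal CycloneDX-style dict from pinned `name==version` lines."""
    components = []
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        if "==" not in line:
            # Unpinned dependencies make the inventory unreliable, so refuse them.
            raise ValueError(f"unpinned dependency: {line}")
        name, version = (part.strip() for part in line.split("==", 1))
        components.append({"type": "library", "name": name, "version": version})
    return {
        "bomFormat": "CycloneDX",
        "specVersion": "1.4",
        "version": 1,
        "components": components,
    }


if __name__ == "__main__":
    requirements = "langchain==0.1.12\nfastapi==0.103.2  # API layer\n"
    print(json.dumps(sbom_from_requirements(requirements), indent=2))
```

Rejecting unpinned lines is a design choice here: an SBOM is only useful for exposure checks if every entry names an exact version.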

Why This Matters for Data & AI Innovation

For organizations embracing Data Mesh, Data Products, and Agentic AI, this dual approach of SBOM + RBAC provides:

- Transparency and trust in dependencies.

- Control and governance over agent actions.

- Confidence to innovate without risking misuse or breaches.

By embedding DevSecOps into the Golden Path, enterprises not only avoid vulnerabilities but also prevent agents from “going rogue.”

Conclusion: Avoid Becoming the Next DefCon Demo

DefCon made one thing terrifyingly clear: AI security is already a battleground. Organizations that fail to act now risk becoming the next highly visible case study in how not to adopt AI.

The solution is not to slow down innovation but to secure the Golden Path. By integrating automated SBOM creation, enforced RBAC, and CI/CD checks into the developer workflow, enterprises can keep delivering quickly while keeping both their dependencies and their agents under control.

Start Innovating Now:

- Automate SBOMs in Every Pipeline: Generate and scan SBOMs in your CI/CD workflow. Make vulnerability management a default, not an option.

- Adopt Security-First Templates with RBAC: Use Backstage templates to scaffold AI projects with RBAC policies for MCP tools enforced by default.

- Shift Security Left for AI: Validate dependencies, RBAC policies, and agent behaviors early. Don’t wait until deployment to discover vulnerabilities.
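One way to validate RBAC policies early is a small lint step in CI. The sketch below is a hedged example: the policy layout (a `roles` mapping of grants with `tool` and `actions` keys), the `APPROVED_ACTIONS` allow-list, and the `lint_rbac_policy` helper are assumptions made for illustration, not the schema of any particular agent_rbac.yaml or policy engine.

```python
# Hypothetical allow-list of actions an agent role may be granted.
APPROVED_ACTIONS = {"read", "create"}


def lint_rbac_policy(policy):
    """Return a list of findings; an empty list means the policy passes."""
    findings = []
    for role, grants in policy.get("roles", {}).items():
        if not grants:
            findings.append(f"{role}: no grants declared")
        for grant in grants or []:
            tool = grant.get("tool", "")
            actions = set(grant.get("actions", []))
            # Wildcards defeat least privilege, so flag them outright.
            if tool == "*" or "*" in actions:
                findings.append(f"{role}: wildcard grant on {tool or '<missing tool>'}")
            unapproved = sorted(actions - APPROVED_ACTIONS - {"*"})
            if unapproved:
                findings.append(
                    f"{role}: unapproved actions on {tool}: {', '.join(unapproved)}"
                )
    return findings


if __name__ == "__main__":
    # Policy as it might look after parsing a policy file (layout is assumed).
    policy = {
        "roles": {
            "customer_support_agent": [
                {"tool": "knowledge_base", "actions": ["read"]},
                {"tool": "support_tickets", "actions": ["create"]},
            ],
        }
    }
    problems = lint_rbac_policy(policy)
    print("policy ok" if not problems else "\n".join(problems))
```

In a pipeline, a non-empty findings list would fail the build, so an over-permissive policy is caught before the agent is ever deployed.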

(this article also appeared in DPIR 11)